Overview

PySPH is an open source framework for Smoothed Particle Hydrodynamics (SPH) simulations. It is implemented in Python and the performance critical parts are implemented in Cython and PyOpenCL.

PySPH is implemented in a way that allows a user to specify the entire SPH simulation in pure Python. High-performance code is generated from this high-level Python code, compiled on the fly and executed. PySPH can use OpenMP to utilize multi-core CPUs effectively. PySPH can work with OpenCL and use your GPGPUs. PySPH also features optional automatic parallelization (multi-CPU) using mpi4py and Zoltan. If you wish to use the parallel capabilities you will need to have these installed.

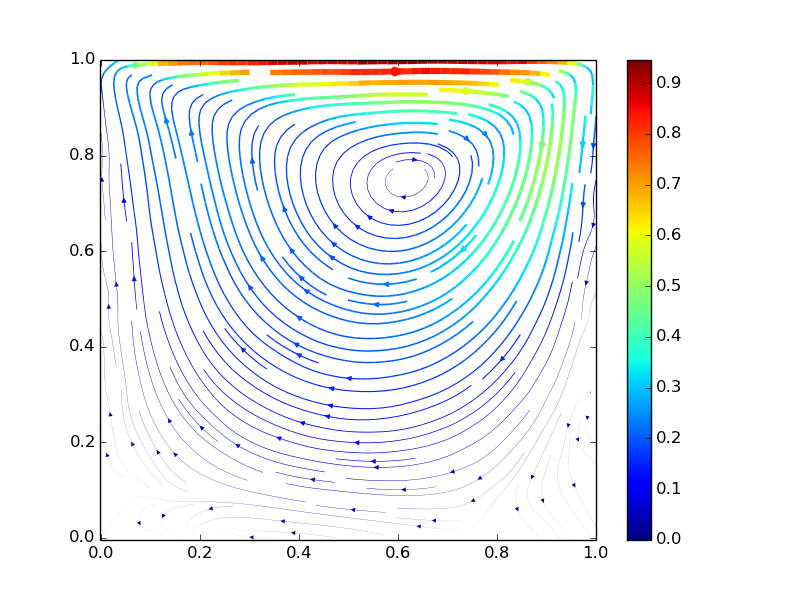

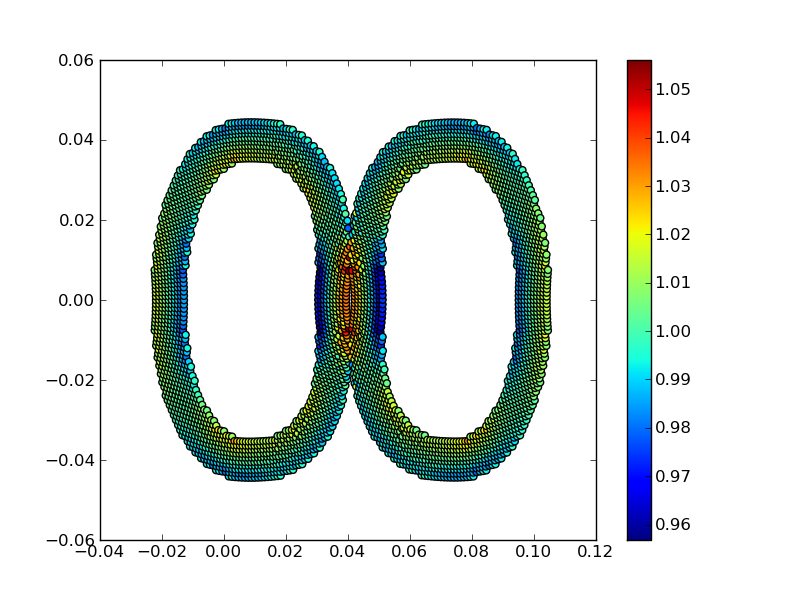

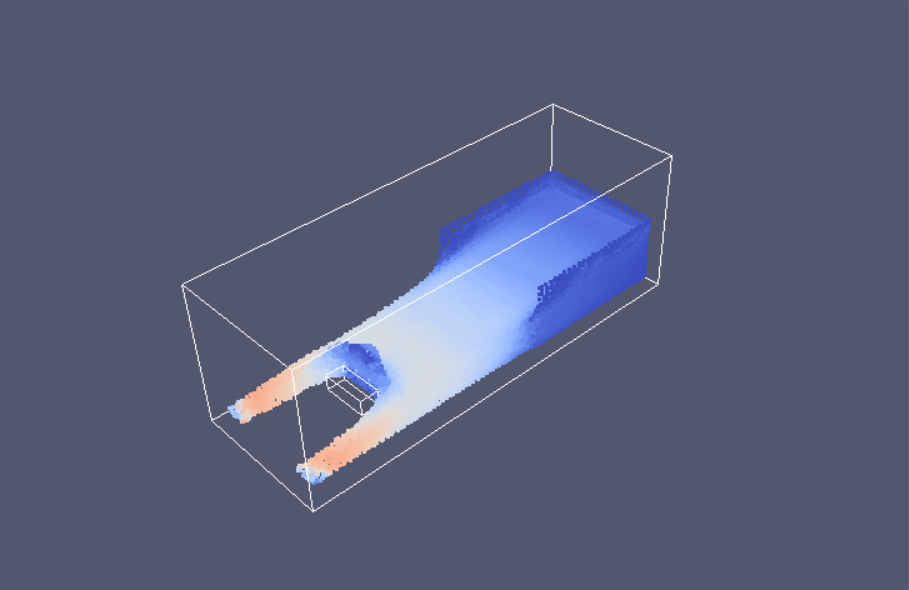

Here are videos of simulations made with PySPH.

PySPH is hosted on GitHub. Please see the site for development details.

Features

- User scripts and equations are written in pure Python.

- Flexibility to define arbitrary SPH equations operating on particles.

- Ability to define your own multi-step integrators in pure Python.

- High-performance: our performance is comparable to hand-written solvers implemented in FORTRAN.

- Seamless multi-core support with OpenMP.

- Seamless GPU support with PyOpenCL.

- Seamless parallel integration using Zoltan.

- BSD license.
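To give a feel for what an SPH equation operating on particles computes, here is a minimal pure-Python sketch of summation density in 1D. It is an illustration only and does not use the PySPH API: the function names `cubic_spline_1d` and `summation_density`, the brute-force neighbor loop, and the particle spacing are all assumptions made for this example.

```python
def cubic_spline_1d(r, h):
    """Standard 1D cubic spline kernel with support radius 2h."""
    q = abs(r) / h
    sigma = 2.0 / (3.0 * h)  # 1D normalisation constant
    if q < 1.0:
        return sigma * (1.0 - 1.5 * q * q + 0.75 * q ** 3)
    if q < 2.0:
        return sigma * 0.25 * (2.0 - q) ** 3
    return 0.0


def summation_density(x, m, h):
    """rho_i = sum_j m_j W(x_i - x_j, h), evaluated by brute force."""
    return [
        sum(m_j * cubic_spline_1d(x_i - x_j, h) for x_j, m_j in zip(x, m))
        for x_i in x
    ]


# Uniformly spaced particles carrying the mass of a unit-density fluid.
dx = 0.1
x = [i * dx for i in range(41)]
m = [1.0 * dx] * len(x)
rho = summation_density(x, m, h=1.2 * dx)
```

Away from the ends of the domain the computed density is close to 1, while particles near the ends see fewer neighbors and report a lower value. A production code would replace the brute-force loop with a nearest-neighbor search.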

SPH formulations

Currently, PySPH has numerous examples to solve the viscous, incompressible Navier-Stokes equations using the weakly compressible (WCSPH) approach. The following formulations are currently implemented:

- Weakly Compressible SPH (WCSPH) for free-surface flows (Gesteira et al. 2010, Journal of Hydraulic Research, 48, pp. 6–27)

(Figure: 3D dam-break past an obstacle, SPHERIC benchmark Test 2.)

- Transport Velocity Formulation for incompressible fluids (Adami et al. 2013, JCP, 241, pp. 292–307).

- SPH for elastic dynamics (Gray et al. 2001, CMAME, Vol. 190, pp 6641–6662)

- Compressible SPH (Puri et al. 2014, JCP, Vol. 256, pp 308–333)

- Generalized Transport Velocity Formulation (GTVF) (Zhang et al. 2017, JCP, 337, pp. 216–232)

- Entropically Damped Artificial Compressibility (EDAC) (Ramachandran et al. 2019, Computers and Fluids, 179, pp. 579–594)

- delta-SPH (Marrone et al. CMAME, 2011, 200, pp. 1526–1542)

- Dual Time SPH (DTSPH) (Ramachandran et al. arXiv preprint)

- Incompressible (ISPH) (Cummins et al. JCP, 1999, 152, pp. 584–607)

- Simple Iterative SPH (SISPH) (Muta et al. arXiv preprint)

- Implicit Incompressible SPH (IISPH) (Ihmsen et al. 2014, IEEE Trans. Vis. Comput. Graph., 20, pp 426–435)

- Godunov SPH (GSPH) (Inutsuka et al. JCP, 2002, 179, pp. 238–267)

- Conservative Reproducing Kernel SPH (CRKSPH) (Frontiere et al. JCP, 2017, 332, pp. 160–209)

- Approximate Godunov SPH (AGSPH) (Puri et al. JCP, 2014, pp. 432–458)

- Adaptive Density Kernel Estimate (ADKE) (Sigalotti et al. JCP, 2006, pp. 124–149)

- Akinci (Akinci et al. ACM Trans. Graph., 2012, pp. 62:1–62:8)
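As an illustration of the weakly compressible approach mentioned above, WCSPH schemes commonly close the equations with a stiff equation of state, so that small density changes produce the pressure that drives the flow. The sketch below uses the Tait form that is widely used in the WCSPH literature. The function name and the parameter values are assumptions chosen for the example, not values taken from PySPH.

```python
def tait_pressure(rho, rho0=1000.0, c0=10.0, gamma=7.0):
    """Tait equation of state: p = B * ((rho / rho0)**gamma - 1)."""
    # B is chosen so that the speed of sound at rho0 equals c0.
    B = rho0 * c0 * c0 / gamma
    return B * ((rho / rho0) ** gamma - 1.0)


p_rest = tait_pressure(1000.0)        # fluid at its reference density
p_compressed = tait_pressure(1010.0)  # fluid compressed by 1%
```

The artificial speed of sound `c0` is typically picked to be about ten times the largest expected flow speed, which keeps density variations to roughly one percent.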

Boundary conditions from the following papers are implemented:

- Generalized Wall BCs (Adami et al. JCP, 2012, pp. 7057–7075)

- Do nothing type outlet BC (Federico et al. European Journal of Mechanics - B/Fluids, 2012, pp. 35–46)

- Outlet Mirror BC (Tafuni et al. CMAME, 2018, pp. 604–624)

- Method of Characteristics BC (Lastiwka et al. International Journal for Numerical Methods in Fluids, 2012, pp. 35–46)

- Hybrid BC (Negi et al. arXiv preprint)

Corrections proposed in the following papers are also part of PySPH:

- Corrected SPH (Bonet et al. CMAME, 1999, pp. 97–115)

- hg-correction (Hughes et al. Journal of Hydraulic Research, pp. 105–117)

- Tensile instability correction (Monaghan J. J. JCP, 2000, pp. 290–311)

- Particle shift algorithms (Xu et al. JCP, 2009, pp. 6703–6725), (Skillen et al. CMAME, 2013, pp. 163–173)

Surface tension models are implemented from:

- Morris surface tension (Morris et al. International Journal for Numerical Methods in Fluids, 2000, pp. 333–353)

- Adami Surface tension formulation (Adami et al. JCP, 2010, pp. 5011–5021)

Credits

PySPH is primarily developed at the Department of Aerospace Engineering, IIT Bombay. We are grateful to IIT Bombay for the support. Our primary goal is to build a powerful SPH-based tool for both application and research. We hope that this makes it easy to perform reproducible computational research.

To see the list of contributors, see the GitHub contributors page.

Some earlier developers not listed on that page are:

- Pankaj Pandey (stress solver and improved load balancing, 2011)

- Chandrashekhar Kaushik (original parallel and serial implementation in 2009)

Research papers using PySPH

The following are some of the works that use PySPH:

- Adaptive SPH method: https://gitlab.com/pypr/adaptive_sph

- Adaptive SPH method applied to moving bodies: https://gitlab.com/pypr/asph_motion

- Convergence of the SPH method: https://gitlab.com/pypr/convergence_sph

- Corrected transport velocity formulation: https://gitlab.com/pypr/ctvf

- Dual-Time SPH method: https://gitlab.com/pypr/dtsph

- Entropically damped artificial compressibility SPH formulation: https://gitlab.com/pypr/edac_sph

- Generalized inlet and outlet boundary conditions for SPH: https://gitlab.com/pypr/inlet_outlet

- Method of manufactured solutions for SPH: https://gitlab.com/pypr/mms_sph

- A demonstration of the binder support provided by PySPH: https://gitlab.com/pypr/pysph_demo

- Manuscript and code for a paper on PySPH: https://gitlab.com/pypr/pysph_paper

- Simple Iterative Incompressible SPH scheme: https://gitlab.com/pypr/sisph

- Geometry generation and preprocessing for SPH simulations: https://gitlab.com/pypr/sph_geom

Citing PySPH

You may use the following article to formally refer to PySPH. A freely available arXiv copy of the paper is at https://arxiv.org/abs/1909.04504.

- Prabhu Ramachandran, Aditya Bhosale, Kunal Puri, Pawan Negi, Abhinav Muta, A. Dinesh, Dileep Menon, Rahul Govind, Suraj Sanka, Amal S Sebastian, Ananyo Sen, Rohan Kaushik, Anshuman Kumar, Vikas Kurapati, Mrinalgouda Patil, Deep Tavker, Pankaj Pandey, Chandrashekhar Kaushik, Arkopal Dutt, Arpit Agarwal. “PySPH: A Python-Based Framework for Smoothed Particle Hydrodynamics”. ACM Transactions on Mathematical Software 47, no. 4 (31 December 2021): 1–38. DOI: https://doi.org/10.1145/3460773.

The BibTeX entry is:

    @article{ramachandran2021a,
      title = {{{PySPH}}: {{A Python-based Framework}} for {{Smoothed Particle Hydrodynamics}}},
      shorttitle = {{{PySPH}}},
      author = {Ramachandran, Prabhu and Bhosale, Aditya and Puri,
                Kunal and Negi, Pawan and Muta, Abhinav and Dinesh,
                A. and Menon, Dileep and Govind, Rahul and Sanka, Suraj and Sebastian,
                Amal S. and Sen, Ananyo and Kaushik, Rohan and Kumar,
                Anshuman and Kurapati, Vikas and Patil, Mrinalgouda and Tavker,
                Deep and Pandey, Pankaj and Kaushik, Chandrashekhar and Dutt,
                Arkopal and Agarwal, Arpit},
      year = {2021},
      month = dec,
      journal = {ACM Transactions on Mathematical Software},
      volume = {47},
      number = {4},
      pages = {1--38},
      issn = {0098-3500, 1557-7295},
      doi = {10.1145/3460773},
      langid = {english}
    }

The following are older presentations:

- Prabhu Ramachandran, PySPH: a reproducible and high-performance framework for smoothed particle hydrodynamics, In Proceedings of the 15th Python in Science Conference, pages 127–135, July 11th to 17th, 2016.

- Prabhu Ramachandran and Kunal Puri, PySPH: A framework for parallel particle simulations, In proceedings of the 3rd International Conference on Particle-Based Methods (Particles 2013), Stuttgart, Germany, 18th September 2013.

History

- 2009: PySPH started with a simple Cython based 1D implementation written by Prabhu.

- 2009-2010: Chandrashekhar Kaushik worked on a full 3D SPH implementation with a more general purpose design. The implementation was in a mix of Cython and Python.

- 2010-2012: The previous implementation was a little too complex and was largely overhauled by Kunal and Pankaj. This became the PySPH 0.9beta release. The difficulty with this version was that it was almost entirely written in Cython, making it hard to extend or add new formulations without writing more Cython code, which was difficult and unpleasant. In addition, it was not as fast as we would have liked. It ended up feeling like we might as well have implemented it all in C++ and exposed a Python interface to that.

- 2011-2012: Kunal also implemented SPH2D and another internal version called ZSPH in Cython which included Zoltan based parallelization using PyZoltan. This was specific to his PhD research and again required writing Cython making it difficult for the average user to extend.

- 2013-present: In early 2013, Prabhu reimplemented the core of PySPH to be almost entirely auto-generated from pure Python. The resulting code was faster than previous implementations and very easy to extend entirely from pure Python. Kunal and Prabhu integrated PyZoltan into PySPH and the current version of PySPH was born. Subsequently, OpenMP support was also added in 2015.

Support

If you have any questions or are running into any difficulties with PySPH you can use the PySPH discussions to ask questions or look for answers.

Please also take a look at the PySPH issue tracker if you have bugs or issues to report.

You could also email or post your questions on the pysph-users mailing list here: https://groups.google.com/d/forum/pysph-users

Changelog

1.0b2

- Release date: Still under development.

1.0b1

Around 140 pull requests were merged. Thanks to all who contributed to this release (in alphabetical order): Abhinav Muta, Aditya Bhosale, Amal Sebastian, Ananyo Sen, Antonio Valentino, Dinesh Adepu, Jeffrey D. Daye, Navaneet, Miloni Atal, Pawan Negi, Prabhu Ramachandran, Rohan Kaushik, Tetsuo Koyama, and Yash Kothari.

- Release date: 1st March 2022.

- Enhancements:

- Use github actions for tests and also test OpenCL support on CI.

- Parallelize the build step of the octree NNPS on the CPU.

- Support for packing initial particle distributions.

- Add support for setting load balancing weights for particle arrays.

- Use meshio to read data and convert them into particles.

- Add support for conditional group of equations.

- Add options to control loop limits in a Group.

- Add `pysph binder`, `pysph cull`, and `pysph cache`.

- Use OpenMP for initialize, loop and post_loop.

- Added many SPH schemes: CRKSPH, SISPH, basic ISPH, SWE, TSPH, PSPH.

- Added a mirror boundary condition along coordinate axes.

- Add support for much improved inlets and outlets.

- Add option `--reorder-freq` to turn on spatial reordering of particles.

- API: Integrators explicitly call update_domain.

- Basic CUDA support.

- Many important improvements to the pysph Mayavi viewer.

- Many improvements to the 3D and 2D jupyter viewer.

- `Application.customize_output` can be used to customize viewer.

- Use `~/.compyle/config.py` for user customizations.

- Move pyzoltan, cyarray, and compyle into their own packages on pypi.

- Bug fixes:

- Fix issue with update_nnps being called too many times when set for a group.

- Many OpenCL related fixes and improvements.

- Fix bugs in the parallel manager code and add profiling information.

- Fix hdf5 compressed output.

- Fix `pysph dump_vtk`.

- Many fixes to various schemes.

- Fix memory leak with the neighbor caching.

- Fix issues with using PySPH on FreeBSD.

1.0a6

90 pull requests were merged for this release. Thanks to the following who contributed to this release (in alphabetical order): A Dinesh, Abhinav Muta, Aditya Bhosale, Ananyo Sen, Deep Tavker, Prabhu Ramachandran, Vikas Kurapati, nilsmeyerkit, Rahul Govind, Sanka Suraj.

- Release date: 26th November, 2018.

- Enhancements:

- Initial support for transparently running PySPH on a GPU via OpenCL.

- Changed the API for how adaptive DT is computed; this is now to be set in the particle array properties called `dt_cfl, dt_force, dt_visc`.

- Support for non-pairwise particle interactions via the `loop_all` method. This is useful for MD simulations.

- Add support for `py_stage1, py_stage2 ...,` methods in the integrator.

- Add support for `py_initialize` and `initialize_pair` in equations.

- Support for using different sets of equations for different stages of the integration.

- Support to call arbitrary Python code from a `Group` via the `pre/post` callback arguments.

- Pass `t, dt` to the reduce method.

- Allow particle array properties to have strides; this allows us to define properties with multiple components. For example, if you need 3 values per particle, you can set the stride to 3.

- Mayavi viewer can now show non-real particles also if saved in the output.

- Some improvements to the simple remesher of particles.

- Add simple STL importer to import geometries.

- Allow user to specify openmp schedule.

- Better documentation on equations and using a different compiler.

- Print convenient warning when particles are diverging or if `h, m` are zero.

- Abstract the code generation into a common core which supports Cython, OpenCL and CUDA. This will be pulled into a separate package in the next release.

- New GPU NNPS algorithms including a very fast oct-tree.

- Added several sphysics test cases to the examples.

- Schemes:

- Add a working Implicit Incompressible SPH scheme (of Ihmsen et al., 2014)

- Add GSPH scheme from SPH2D and all the approximate Riemann solvers from there.

- Add code for Shepard and MLS-based density corrections.

- Add kernel corrections proposed by Bonet and Lok (1999)

- Add corrections from the CRKSPH paper (2017).

- Add basic equations of Parshikov (2002) and Zhang, Hu, Adams (2017)

- Bug fixes:

- Ensure that the order of equations is preserved.

- Fix bug with dumping VTK files.

- Fix bug in Adami, Hu, Adams scheme in the continuity equation.

- Fix mistake in WCSPH scheme for solid bodies.

- Fix bug with periodicity along the z-axis.
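The 1.0a6 notes above mention that a particle array property can have a stride, so that each particle carries several values. The sketch below shows one way such a property can be laid out in a flat sequence. The contiguous per-particle layout and the helper name `get_component_values` are assumptions made for illustration and are not taken from the PySPH source.

```python
def get_component_values(flat, stride, index):
    """Return the `stride` values stored for particle `index`."""
    start = index * stride
    return flat[start:start + stride]


n_particles = 4
stride = 3
# Flat storage: particle 0 occupies entries 0..2, particle 1 entries 3..5, ...
flat = [float(k) for k in range(n_particles * stride)]
second = get_component_values(flat, stride, 1)
```

With a stride of 3, a property for `n_particles` particles needs `3 * n_particles` entries, and the values for particle `i` start at offset `3 * i`.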

1.0a5

- Release date: 17th September, 2017

- Mayavi viewer now supports empty particle arrays.

- Fix error in scheme chooser which caused problems with default scheme property values.

- Add starcluster support/documentation so PySPH can be easily used on EC2.

- Improve the particle array so it automatically ravels the passed arrays and also accepts constant values without needing an array each time.

- Add a few new examples.

- Added 2D and 3D viewers for Jupyter notebooks.

- Add several new Wendland Quintic kernels.

- Add option to measure coverage of Cython code.

- Add EDAC scheme.

- Move project to github.

- Improve documentation and reference section.

- Fix various bugs.

- Switch to using pytest instead of nosetests.

- Add a convenient geometry creation module in `pysph.tools.geometry`.

- Add support to script the viewer with a Python file, see `pysph view -h`.

- Add several new NNPS schemes like extended spatial hashing, SFC, oct-trees etc.

- Improve Mayavi viewer so one can view the velocity vectors and any other vectors.

- Viewer now has a button to edit the visualization properties easily.

- Add simple tests for all available kernels. Add `SuperGaussian` kernel.

- Add a basic dockerfile for pysph to help with the CI testing.

- Update build so pysph can be built with a system zoltan installation that is part of trilinos using the `USE_TRILINOS` environment variable.

- Wrap the `Zoltan_Comm_Resize` function in `pyzoltan`.

1.0a4

- Release date: 14th July, 2016.

- Improve many examples to make it easier to make comparisons.

- Many equation parameters no longer have defaults to prevent accidental errors from not specifying important parameters.

- Added support for `Scheme` classes that manage the generation of equations and solvers. A user simply needs to create the particles and setup a scheme with the appropriate parameters to simulate a problem.

- Add support to easily handle multiple rigid bodies.

- Add support to dump HDF5 files if h5py is installed.

- Add support to directly dump VTK files using either Mayavi or PyVisfile, see `pysph dump_vtk`.

- Improved the nearest neighbor code, which gives about 30% increase in performance in 3D.

- Remove the need for the `windows_env.bat` script on Windows. This is automatically setup internally.

- Add test that checks if all examples run.

- Remove unused command line options and add a `--max-steps` option to allow a user to run a specified number of iterations.

- Added Ghia et al.’s results for lid-driven-cavity flow for easy comparison.

- Added some experimental results for the dam break problem.

- Use argparse instead of optparse, as optparse is deprecated in Python 3.x.

- Add `pysph.tools.automation` to facilitate easier automation and reproducibility of PySPH simulations.

- Add spatial hash and extended spatial hash NNPS algorithms for comparison.

- Refactor and cleanup the NNPS related code.

- Add several gas-dynamics examples and the `ADEKEScheme`.

- Work with mpi4py version 2.0.0 and older versions.

- Fixed major bug with TVF implementation and add support for 3D simulations with the TVF.

- Fix bug with uploaded tarballs that breaks `pip install pysph` on Windows.

- Fix the viewer UI to continue playing files when refresh is pushed.

- Fix bugs with the timestep values dumped in the outputs.

- Fix floating point issues with timesteps, where examples would run a final extremely tiny timestep in order to exactly hit the final time.
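The last item above concerns a common floating point pitfall: repeatedly adding a timestep such as 0.1 leaves the accumulated time a hair short of the final time, so a naive `while t < t_final` loop takes one extra, extremely tiny step. The sketch below shows one way to avoid this with a relative tolerance. It is an illustration of the problem only; the function name `step_sizes` and the tolerance are assumptions, and this is not necessarily how PySPH resolved the issue.

```python
def step_sizes(t_final, dt, tol=1e-12):
    """Return the list of step sizes used to advance from 0 to t_final."""
    steps = []
    t = 0.0
    # Stop once the remaining time is negligible relative to t_final.
    while t_final - t > tol * max(1.0, abs(t_final)):
        # Clip the step so the final time is never overshot.
        h = min(dt, t_final - t)
        steps.append(h)
        t += h
    return steps


steps = step_sizes(1.0, 0.1)
```

Here ten steps of size 0.1 are taken and the loop stops, even though the accumulated time is slightly below 1.0 in floating point arithmetic.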

1.0a3

- Release date: 18th August, 2015.

- Fix bug with `output_at_times` specification for solver.

- Put generated sources and extensions into a platform specific directory in `~/.pysph/sources/<platform-specific-dir>` to avoid problems with multiple Python versions, operating systems etc.

- Use locking while creating extension modules to prevent problems when multiple processes generate the same extension.

- Improve the `Application` class so users can subclass it to create examples. The users can also add their own command line arguments and add pre/post step/stage callbacks by creating appropriate methods.

- Moved examples into `pysph.examples`. This makes the examples reusable and easier to run as installation of pysph will also make the examples available. The examples also perform the post-processing to make them completely self-contained.

- Add support to write compressed output.

- Add support to set the kernel from the command line.

- Add a new `pysph` script that supports `view`, `run`, and `test` sub-commands. The `pysph_viewer` is now removed, use `pysph view` instead.

- Add a simple remeshing tool in `pysph.solver.tools.SimpleRemesher`.

- Cleanup the symmetric eigenvalue computing routines used for solid mechanics problems and allow them to be used with OpenMP.

- The viewer can now view the velocity magnitude (`vmag`) even if it is not present in the data.

- Port all examples to use new `Application` API.

- Do not display unnecessary compiler warnings when there are no errors but display verbose details when there is an error.

1.0a2

- Release date: 12th June, 2015

- Support for tox, this makes it trivial to test PySPH on py26, py27 and py34 (and potentially more if needed).

- Fix bug in code generator where it is unable to import pysph before it is installed.

- Support installation via `pip` by allowing `egg_info` to be run without cython or numpy.

- Added Codeship CI build using tox for py27 and py34.

- CI builds for Python 2.7.x and 3.4.x.

- Support for Python-3.4.x.

- Support for Python-2.6.x.

1.0a1

- Release date: 3rd June, 2015.

- First public release of the new PySPH code which uses code-generation and is hosted on bitbucket.

- OpenMP support.

- MPI support using Zoltan.

- Automatic code generation from high-level Python code.

- Support for various multi-step integrators.

- Added an interpolator utility module that interpolates the particle data onto a desired set of points (or grids).

- Support for inlets and outlets.

- Support for basic Gmsh input/output.

- Plenty of examples for various SPH formulations.

- Improved documentation.

- Continuous integration builds on Shippable, Drone.io, and AppVeyor.